Network reliability engineering demands specific capabilities from your network tooling, and the simplicity of a declarative toolset makes life so much more pleasant. It’s that feeling when you’re watching the sun set over a blue ocean, sitting on golden sand, drinking fresh pressed mango juice. You know the feeling. It feels like life has arranged events so that you can be there and become one with what you’re melting into. By the time you’ve read this post, the hope is that you, too, can enjoy sipping on cool juice whilst watching a glorious day come to a close.

What’s Different?

NRE != Automated Networks, just the same as a fast car != driving fast. To increase reliability, we have to think about the approach in depth. If we want to make a change and ensure what we want has happened how we want it, we need an observability layer, verification and validation along with forward and backward planning for resource management. In real life, this equates to:

1. Measure enough state to quantify and validate our desired state or to measure a change (modern telemetry systems)

2. Act upon changes from step one and trigger open or closed workflows in response (classic event-driven automation)

3. Generate backward and forward impulse changes to state

Declarative automation falls squarely in the realm of point three. A great declarative toolset lets you plan, apply and remove resources without treating network entities as wizardry. You still need baseline knowledge of the requirements and an understanding of device limits. Automation of this nature typically works on infinite[1] cloud resources; therefore, some tracking of resource consumption is recommended for the purposes of reliability.
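To make the plan step concrete, here is a minimal Python sketch of how a declarative tool might compare what you asked for with what exists and derive the forward and backward changes. It is an illustration of the idea only, not Terraform's implementation; the `plan` function and the resource names are hypothetical.

```python
def plan(desired, current):
    """Return the actions needed to move `current` towards `desired`.

    Both arguments map a (hypothetical) resource name to a dict of attributes.
    """
    actions = {"create": [], "update": [], "destroy": []}
    for name in sorted(desired.keys() | current.keys()):
        if name not in current:
            actions["create"].append(name)    # declared but not yet present
        elif name not in desired:
            actions["destroy"].append(name)   # present but no longer declared
        elif desired[name] != current[name]:
            actions["update"].append(name)    # present but attributes differ
    return actions


# Hypothetical example data: two declared VLANs, two existing VLANs.
desired = {"vlan_blue": {"vlan_id": 100}, "vlan_green": {"vlan_id": 200}}
current = {"vlan_blue": {"vlan_id": 101}, "vlan_red": {"vlan_id": 300}}
print(plan(desired, current))
```

The caller only ever states the desired end result; the create, update and destroy lists fall out of the comparison.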

Boiling this down, cloud resources, as well as being infinite, are also treated as immutable: we create and destroy, but do not necessarily modify, once the infrastructure is created. Immutable infrastructure[2] is usually treated as “build and throw away,” as opposed to build and maintain.

Terraform

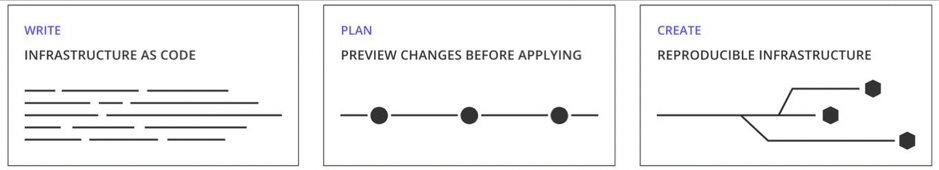

Terraform is one tool that stands out in the IT automation world for its declarative automation capabilities.

A graph system lies at the very heart of this tool, enabling Terraform to understand what must happen to make the resources come to life and what needs to be undone for them to be removed. It features a plugin system for extensibility and its own configuration language called HashiCorp Configuration Language[3] (HCL).
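The role of the graph can be sketched in a few lines of Python. This is a simplified illustration rather than Terraform's own code: it assumes a hypothetical set of three resources where each one depends on the one before it, and uses the standard library's `graphlib` (Python 3.9 or later) to derive a creation order. Removal is simply that order reversed.

```python
from graphlib import TopologicalSorter

# Hypothetical resources: each key depends on the names in its set.
DEPENDENCIES = {
    "vlan_blue": set(),
    "interface_ge-0/0/1": {"vlan_blue"},
    "routing_instance_cust_a": {"interface_ge-0/0/1"},
}


def create_order(deps):
    """Return resources ordered so that every dependency comes first."""
    return list(TopologicalSorter(deps).static_order())


def destroy_order(deps):
    """Return the reverse of the creation order: dependents are removed first."""
    return list(reversed(create_order(deps)))


print(create_order(DEPENDENCIES))
print(destroy_order(DEPENDENCIES))
```

Because the ordering comes from the declared dependencies, the author of the resources never has to write the sequence of steps by hand, in either direction.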

Select Junos resources can be represented in HCL code, which Terraform then orders and creates over a NETCONF session to the target device. Just declare what it is you want and Terraform takes care of it. When it comes to payload verification, after a Terraform apply operation, a configuration read against Junos ensures the control plane contains the resource information that Terraform previously requested. This means that, even at this level, we can reduce the work involved in automating by removing the need for configuration unit tests. If Terraform’s local state doesn’t match the remote state, Terraform reports the difference to you. Additionally, Terraform takes care of forward and backward impulse changes, so it can both apply the desired state and remove it just as easily. Thus, we don’t worry about how to remove the state; we just ask Terraform to remove it for us.
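The read-back check described above amounts to comparing the attributes that were requested with the attributes the device reports. Here is a minimal Python sketch of that comparison; the `diff_state` helper and the attribute names are hypothetical, and in practice the observed values would come from a configuration read over NETCONF rather than a literal dict.

```python
def diff_state(requested, observed):
    """Return {attribute: (requested_value, observed_value)} for every mismatch.

    An attribute missing on one side shows up with None for that side.
    """
    drift = {}
    for key in requested.keys() | observed.keys():
        want = requested.get(key)
        have = observed.get(key)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical example: the device reports a different VLAN ID than requested.
requested = {"name": "blue", "vlan_id": 100}
observed = {"name": "blue", "vlan_id": 200}
print(diff_state(requested, observed))
```

An empty result means the device holds what was asked for; anything else is drift to report.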

Terraform itself is a deep tool and offers wondrous capabilities like graph generation and tainting. This post is an introduction to Terraform with Junos, and I implore you to read up on Terraform and HCL to understand them in much greater depth.

Workflows

When it comes to HCL, these resource files can be kept in GitOps-style workflows, meaning the state is portable and reproducible. Through the power of configuration sourcing, we can drive the infrastructure to a previous known state under failure conditions. Considering these are highly complex environments with high rates of change, we may desire a macro-state that previous configuration backups cannot offer without some tweaks. This is the power of configuration sourcing.

Terraform also features workflow-friendly controls like localized locking, which prevents Terraform from being used simultaneously in the same set of directories. Different Terraform backends can also be configured, meaning we can store state on remote systems to prevent local state from being accidentally or forcefully deleted.
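The idea behind a lock is easy to sketch in Python. The example below is not how Terraform implements locking; it is a minimal illustration that assumes a hypothetical lock file path and relies on the operating system refusing to create a file that already exists.

```python
import os
from contextlib import contextmanager


@contextmanager
def state_lock(path):
    """Hold an exclusive lock file at `path` for the duration of the block.

    Raises FileExistsError if another run already holds the lock.
    """
    # O_CREAT | O_EXCL makes creation fail if the file is already there.
    fd = os.open(path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    try:
        os.write(fd, str(os.getpid()).encode())  # record who holds the lock
        yield
    finally:
        os.close(fd)
        os.remove(path)  # release the lock for the next run
```

A run would wrap its plan and apply steps in `with state_lock(...)`, so a second run started in the same directory fails fast instead of racing the first.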

Finite Resources

Hardware platforms across any network vendor’s portfolio offer finite resources, like a fixed number of ports or a fixed number of VRFs for customer connectivity. Cloud providers aren’t typically the same. You can repeatedly ask for VMs without any real fear of running dry. With any form of automation, it’s important to track resource consumption externally from the toolchain and feed in variables or data sources so that the finite resource consumption can be controlled properly. Terraform can operate with either form, making resource management easy.
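As a rough illustration of external tracking, the Python sketch below models a fixed-capacity pool, such as the VRFs available on one device. The `ResourcePool` class, the capacity of two and the customer names are all hypothetical; a real deployment would more likely keep this in an inventory or IPAM system and pass the result to Terraform as variables or data sources.

```python
class ResourcePool:
    """Tracks allocations against a fixed capacity, e.g. VRFs on one device."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.allocated = set()

    def remaining(self):
        """Number of allocations still available."""
        return self.capacity - len(self.allocated)

    def allocate(self, name):
        """Reserve one slot for `name`, or raise if the pool is exhausted."""
        if name in self.allocated:
            return  # already held; allocating again is a no-op
        if self.remaining() == 0:
            raise RuntimeError(f"pool exhausted: cannot allocate {name!r}")
        self.allocated.add(name)

    def release(self, name):
        """Free the slot held by `name`, if any."""
        self.allocated.discard(name)


# Hypothetical device with room for two customer VRFs.
vrfs = ResourcePool(capacity=2)
vrfs.allocate("cust_a")
vrfs.allocate("cust_b")
print(vrfs.remaining())
```

Checking the pool before a run turns "the device is full" from a failed apply into a decision made ahead of time.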

Join Us and Learn More

In Part 5 of our automation webinar series, we discuss Declarative Network Automation and how it can help you drink more cool mango juice while also helping your organization. View the rest of the webinar series here.

Terraform removes complexity from imperative tasks, resulting in simpler, easily understood demands that can then be inserted into orchestrated automations. Creation and apply steps are just a keyword difference, and you can rest assured that, through resource dependency management and simplified knowledge, all of the components needed to stand up a service are dealt with.

For more information, check out the Terraform lesson on NRE labs and learn how to make use of the Terraform providers that we’re working on.

1. Cloud resources are typically bound to financial constraints and rarely physical

2. Immutable infrastructure

3. HCL1 is used up to Terraform v0.11 and HCL2 is used from v0.12 and beyond